One of the most common problems that occur while running concurrent transactions is the Dirty Read problem. A dirty read occurs when one transaction is permitted to read data that is being modified by another transaction that is running concurrently but which has not yet committed itself.

If the transaction that modifies the data commits itself, the dirty read problem doesn’t occur. However if the transaction that modifies the data is rolled back after the other transaction has read the data, the latter transaction has dirty data that doesn’t actually exist.

As always, be sure you are well backed up before experimenting with a new code. See this article on backing up MS SQL databases if you’re not sure.

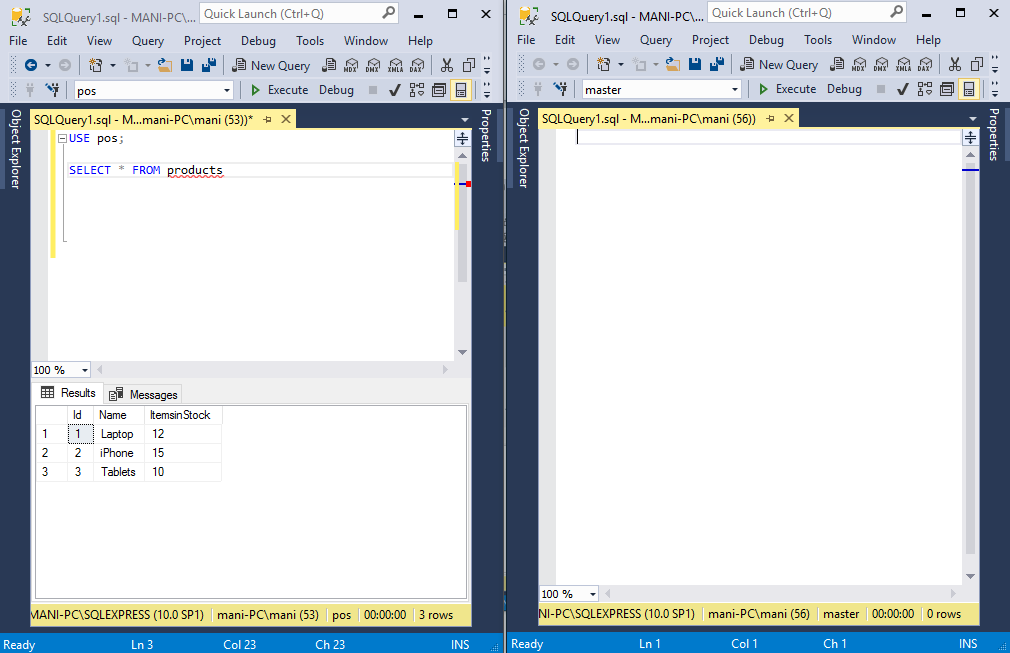

Let’s understand this with the help of an example. Suppose we have a table named ‘Product’ that stores id, name, and ItemsinStock for the product.

The table looks like this:

[table id=20 /]

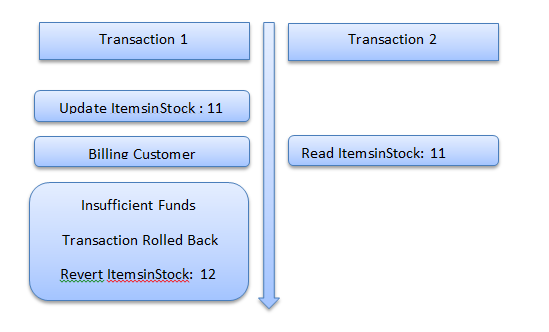

Suppose you have an online system where a user can purchase products and view products at the same time. Take a look at the following figure.

Consider a scenario where a user tries to purchase a product. Transaction 1 will perform the purchase task for the user. The first step in the transaction will be to update the ItemsinStock.

Before the transaction, there are 12 items in the stock; the transaction will update this to 11. The transaction will now communicate with an external billing gateway.

If at this point in time, another transaction, let’s say Transaction 2, reads ItemsInStock for laptops, it will read 11. However, if subsequently, the user behind Transaction 1 turns out to have insufficient funds in his account, Transaction 1 will be rolled back and the value for ItemsInStock column will revert to 12.

However, Transaction 2 has 11 as the value for ItemsInStock column. This is dirty data and the problem is called dirty read problem.

Working Example of Dirty Read Problem

Let’s us take a look at the dirty read problem in action in SQL Server. As always, first, let’s create our table and add some dummy data to it. Execute the following script on your database server.

CREATE DATABASE pos;

USE pos;

CREATE TABLE products

(

Id INT PRIMARY KEY,

Name VARCHAR(50) NOT NULL,

ItemsinStock INT NOT NULL

)

INSERT into products

VALUES

(1, 'Laptop', 12),

(2, 'iPhone', 15),

(3, 'Tablets', 10)Now, open two SQL server management studio instances side by side. We will run one transaction in each of these instances.

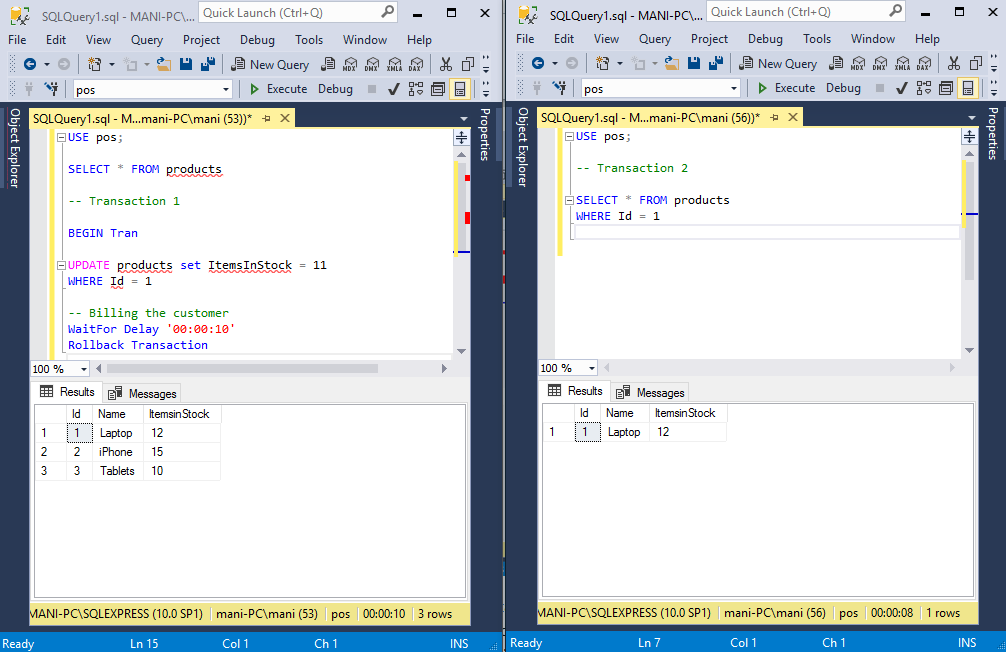

Add the following script to the first instance of SSMS.

USE pos;

SELECT * FROM products

-- Transaction 1

BEGIN Tran

UPDATE products set ItemsInStock = 11

WHERE Id = 1

-- Billing the customer

WaitFor Delay '00:00:10'

Rollback TransactionIn the above script, we start a new transaction that updates the value for the “ItemsInStock” column of the products table where Id is 1. We then simulate the delay for billing the customer by using the ‘WaitFor’ and ‘Delay’ functions. A delay of 10 seconds has been set in the script. After that, we simply roll back the transaction.

In the second instance of SSMS, we simply add following SELECT statement.

USE pos;

-- Transaction 2

SELECT * FROM products

WHERE Id = 1Now, first run the first transaction, i.e. execute the script in the first instance of SSMS, and then immediately execute the script in the second instance of the SSMS.

You will see that both transactions will keep executing for 10 seconds and after that, you will see that the value for the ‘ItemsInStock’ column for the record with Id 1 is still 12 as shown by the second transaction. Though the first transaction updated it to 11, waited for 10 seconds, and then rolled it back to 12, the value shown by the second transaction is 12 rather than 11.

What actually happened is that when we ran the first transaction, it updated the value for the ‘ItemsinStock’ column. It then waited for 10 seconds and then rolled the transaction back.

Though we started the second transaction immediately after the first, it had to wait for the first transaction to complete. That is why the second transaction also waited for 10 seconds and why the second transaction executed immediately after the first transaction completed its execution.

Read Committed Isolation Level

Why did transaction 2 have to wait for the completion of transaction 1 before it executed?

The answer is that the default isolation level between transactions is “read committed”. The Read Committed isolation level ensures that data can only be read by a transaction if it is in the committed state.

In our example, transaction 1 updated the data but it did not commit it until it was rolled back. This is why transaction 2 had to wait for transaction 1 to commit the data or rollback the transaction before it could read the data.

Now, in practical scenarios, we often have multiple transactions taking place on a single database at the same time and we do not want every transaction to have to wait for its turn. This can make databases very slow. Imagine buying something online from a large website which could only process one transaction at a time!

Reading Uncommitted Data

The answer to this problem is to allow your transactions to work with uncommitted data.

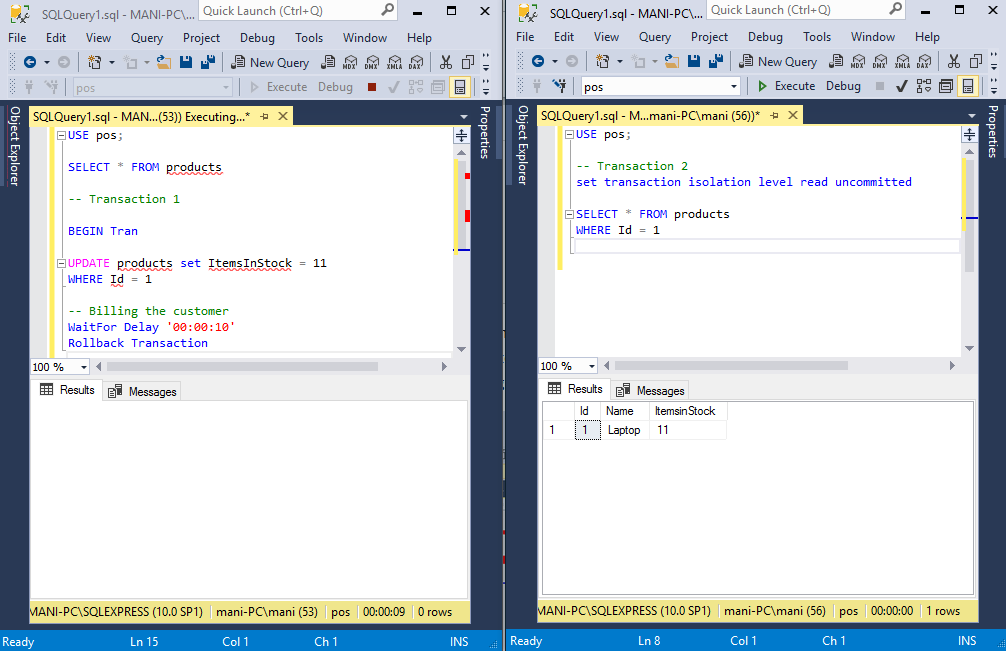

To read uncommitted data, simply set the isolation level of the transaction to “read uncommitted.” Update the transaction 2 by adding an isolation level as per the script below.

USE pos;

-- Transaction 2

set transaction isolation level read uncommitted

SELECT * FROM products

WHERE Id = 1Now if you run the transaction 1 and then immediately run transaction 2, you will see that transaction 2 will not wait for transaction 1 to commit data. Transaction 2 will immediately read the dirty data. This is shown in the following figure:

Here the instance on the left is running transaction 1 and the instance on the right is running transaction 2.

We run transaction 1 first which updates the value of “ItemsinStock” for id 1 to 11 from 12 and then waits for 10 seconds before being rolled back.

Meanwhile, transaction w reads the dirty data which is 11, as shown in the result window on the right. Because transaction 1 is rolled back this is not the actual value in the table. The actual value is 12. Try executing transaction 2 again and you will see that this time it retrieves 12.

Read uncommitted is the only isolation level that has the dirty read problem. This isolation level is least restrictive of all the isolation levels and allows reading uncommitted data.

Obviously, there are pros and cons to using Read Uncommitted it depends on what application your database is used for. Obviously, it would be a very bad idea to use this for the database behind an ATM systems and other very secure systems. However, for applications where speed is very important (running large e-commerce stores) using Read Uncommitted makes more sense.

Tags: sql server Last modified: September 23, 2021