SQL Server AlwaysOn Availability Groups is Microsoft’s latest technology for addressing the High Availability and Disaster Recovery needs of organizations using SQL Server. One big advantage of AlwaysOn is the ability to address both HA and DR in one implementation. The key benefits of AlwaysOn that we have experienced are as follows:

-

We can group related databases as part of a single Availability Group and have them failover together in case of such a need. This is especially useful for applications that depend on more than one database such as Microsoft Office SharePoint, Microsoft Lync, or Sage.

-

When compared with SQL Server Failover Cluster Instances, we find that storage as a single point of failure has been eliminated since each instance which constitutes a replica has its own storage assigned.

-

With AlwaysOn, it is possible to configure HA and DR at one time. This is achieved by creating Multi-Site Windows Failover Clusters as the basis of your AlwaysOn configuration. Performing a Role Switch when using AlwaysOn is significantly simpler than doing it when using Transaction Log Shipping.

The WSFC Dependency

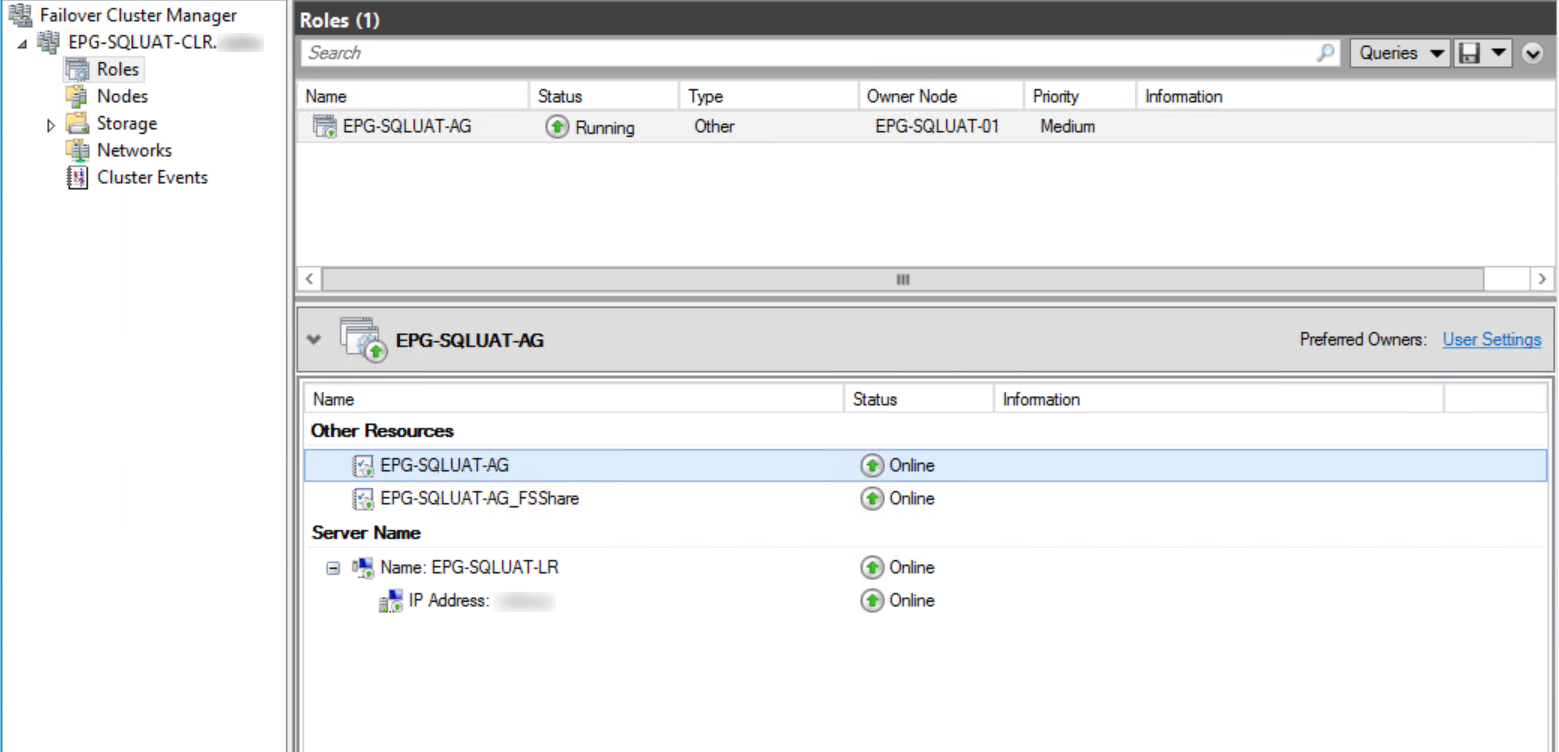

When using SQL Server AlwaysOn AG for High Availability and Disaster Recovery, first, you need to configure a Windows Failover Cluster. AlwaysOn AGs depend on WFCS to manage the AlwaysOn AG as a role which is composed of such cluster resources as the Availability Group name, the file share name, Listener name, and an IP address.

Fig. 1 AlwaysOn AG as a Cluster Resource

Quorum

Quorum is the minimum number of votes required for a majority in a Failover Cluster. Quorum determines how many node failures the cluster can sustain. Through the Private Network on port 3343, all cluster nodes communicate health status and resource monitoring information. In case of failure, the votes show which nodes have “Up” status and on which node resources must be brought online.

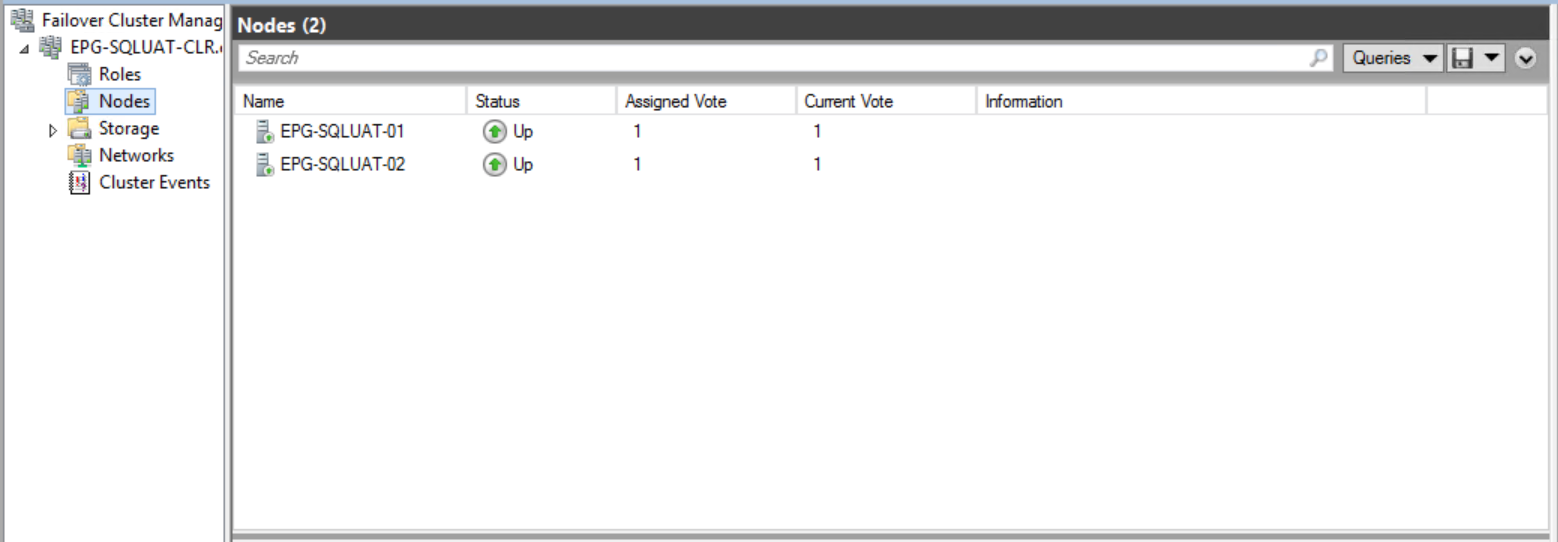

Since Windows Server 2012, the maximum number of cluster nodes supported is sixteen. However, in most environments I am familiar with, two-node clusters are common. A two-node cluster poses a small problem in terms of achieving quorum since each node has one vote and if there is a problem with communication between the two, each one may assume the other is not healthy. This is called a split-brain scenario. Split-brain scenarios are the reason for configuring a tiebreaker such as a disk or file share.

If you have an odd number of nodes, it is not necessary to configure a tiebreaker. Dynamic Quorum Configuration and Dynamic Witness were introduced in Windows Server 2012 and Windows Server 2012 R2 respectively. With the help of these technologies, Windows automatically redistributes the votes in a cluster so that the number of nodes in a cluster doesn’t matter in establishing a Quorum. A cluster node’s vote is removed by setting the Cluster Property “NodeWeight” to 0. These features are enabled by default.

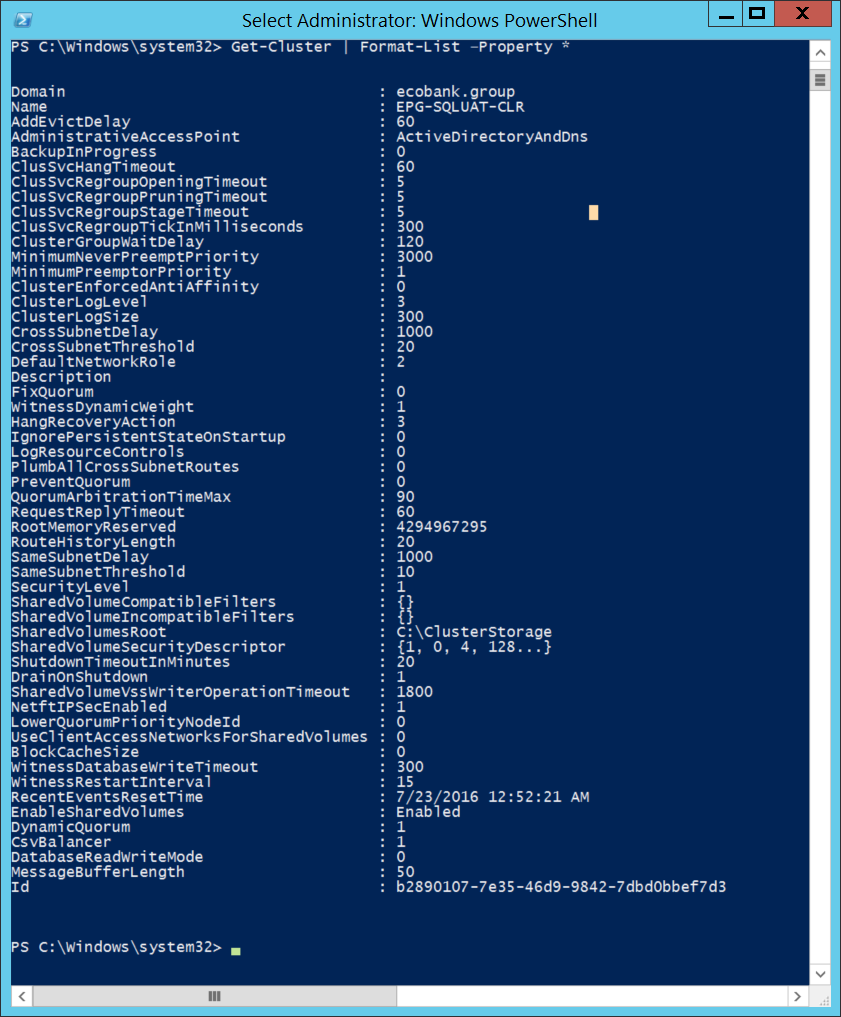

Fig. 2 Getting All Cluster Properties Using PowerShell

Fig. 3 Assigned Votes in a Two-Node Cluster

Using PowerShell

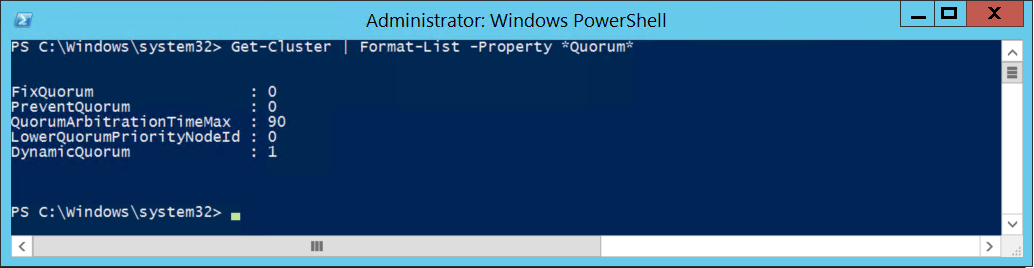

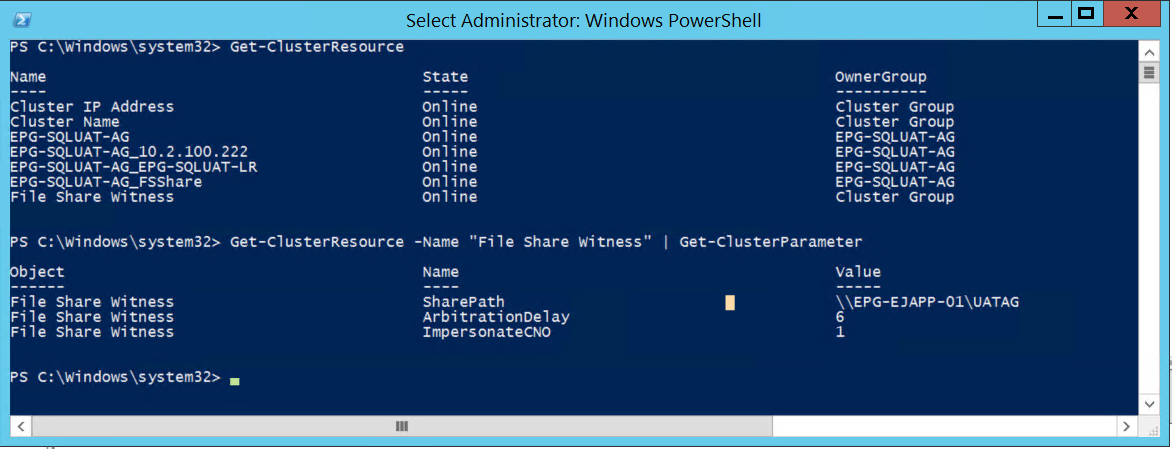

The PowerShell Command Get-Cluster can be used to check the Quorum configuration on a Windows Cluster. Fig. 4 shows how to check all Cluster properties related to Quorum on a cluster and Fig. 5 depicts the properties of the File Share Witness. There are many other PowerShell Commands to check and manage Windows Clusters.

Get-Cluster | Format-List –Property *Quorum*

Fig. 4 PowerShell Command to Check Quorum Related Properties

Get-ClusterResource

Get-ClusterResource -Name "File Share Witness" | Get-ClusterParameter

Fig. 5 PowerShell Command to Check Details of File Share Witness Properties

Quorum Modes

Windows Server Failover Cluster allows configuring up to four modes. Quorum modes are essentially options you choose to determine how the cluster will handle node failures.

1. Node Majority

This Quorum Mode can sustain failures of up to (n/2)-1 nodes. It is recommended for clusters with an odd number of nodes. For example, in a five-node cluster, it would take a failure of two nodes to cause a cluster failure.

2. Node and Disk Majority

Can sustain failures of up to half the number of cluster nodes as long as the disk witness (also called the quorum disk) remains online.

3. Node and File Share Majority

This Quorum Mode can sustain failures of up to half the number of cluster nodes as long as the file share remains accessible. As of Windows Server 2012 R2, Microsoft recommends that a witness (Disk or File Share) should always be configured when building a cluster.

4. No Majority

This is a Disk Only mode. This mode can sustain failures of all nodes except one as long as the disk is online. This mode is not recommended since the disk becomes a single point of failure.

Tips on Configuring Node and File Share Majority

AlwaysOn Availability Groups support only two of those Quorum Modes: Node Majority and Node and File Share Majority. When building an SQL Server AlwaysOn Availability Group cluster, there are a few points the DBA should keep in mind:

1. Using Physical Servers

When configuring a two-node cluster for AlwaysOn, your nodes must reside in different physical racks. The server hosting your file share must reside in a third rack.

2. Using Virtual Servers

When configuring a two-node cluster for AlwaysOn, your virtual machines must reside on separate hosts. The virtual machine hosting your file share must reside on a third host.

3. Multi-Site Clustering

When configuring a multi-node cluster for AlwaysOn across data centers, in an ideal scenario the file server hosting your file share must reside in a third data center.

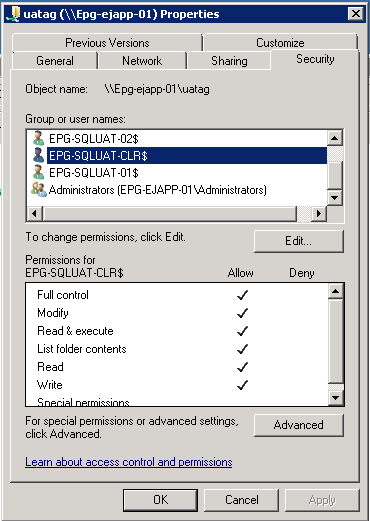

4. File Share Permissions

The Cluster Name Object should have permissions on the file share used as the Quorum Witness. Without this, you would typically experience errors in attempting to configure the Quorum Witness.

Fig. 6 Permissions on File Share

5. Online Configuration

Quorum modes can be configured while the cluster is online. So in case the file share server fails or needs to be reconfigured, ensure you quickly re-configure to guarantee there are no unexpected failures especially on a two-node cluster.

A Real-Live Use Case

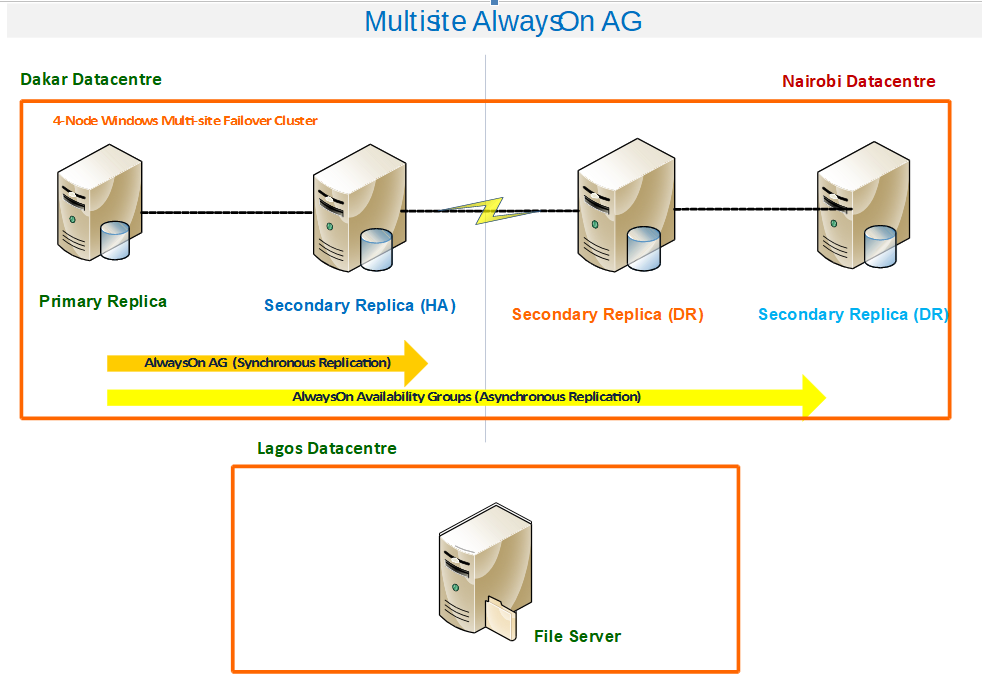

The diagram in Fig. 7 depicts a real Multi-Site AlwaysOn AG Cluster. It is a four-node cluster with two nodes on one site and two others on a remote DR site. The File Server hosting the File Share used as the tie-breaker is hosted in a third data center. In the present case, the File Server resides in the same city as the Primary Data Center but if you can afford it, it would be ideal to have the File Server in another city. The communication between the three sides must be of good quality in order to avoid false positives.

For example, in our initial implementation of this cluster, we used “Synchronous Replication with Automatic Failover” across the Live and DR sites. On more than one occasion we experienced a glitch in communication which triggered an automatic Failover to the DR site and exposed a flaw in our configuration. This caused the Listener name to resolve to the associated IP addresses in the DR site and clients could no longer connect because the communication with this new IP address was not allowed on the network firewalls. We simply failed back to the Primary Site to mitigate the problem and changed the configuration to “Asynchronous Replication with manual Failover” for nodes residing across data centers. We plan to cover the name resolution aspect in our next “AlwaysOn” article.

Fig. 7 A Real-Live Use Case

Conclusion

AlwaysOn Availability Groups feature was introduced in SQL Server 2012 and is Microsoft’s latest technology for addressing both High Availability and Disaster Recovery needs. Configuring AlwaysOn Availability Groups depend heavily on Windows Failover Cluster Service. Failover Clusters, in turn, depend much on correct quorum configuration. When building AlwaysOn on Multi-Site Clusters the latency between your nodes in the different sites and the file share used as an arbiter really matters. Ensure your quorum configuration is always in top shape to avoid unexpected behaviors with the Availability Groups.